Redirect hygiene is one of those things that quietly degrades a site’s performance when left unchecked. Broken internal links, looping redirects, chains that burn crawl budget, canonicals pointing at 3xx responses: these issues compound over time, especially after migrations or large-scale content changes.

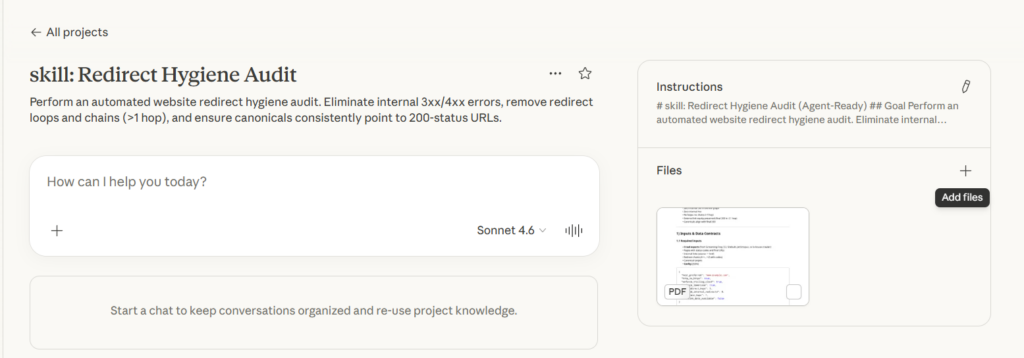

This is a Claude skill file that automates the entire redirect audit process. Drop it into a Claude Project, feed it your crawl data, and it will evaluate your site against 20 business rules, classify every violation by severity, score impact using backlinks and traffic signals, generate a prioritized task list with owners, and output server rule snippets ready for deployment.

The skill file covers everything from URL normalization and redirect graph construction to best-match mapping for retired URLs and rollback planning. It is designed to work with exports from Screaming Frog, Sitebulb, JetOctopus, or any crawler that outputs pages, internal links, redirect chains, and canonical data.

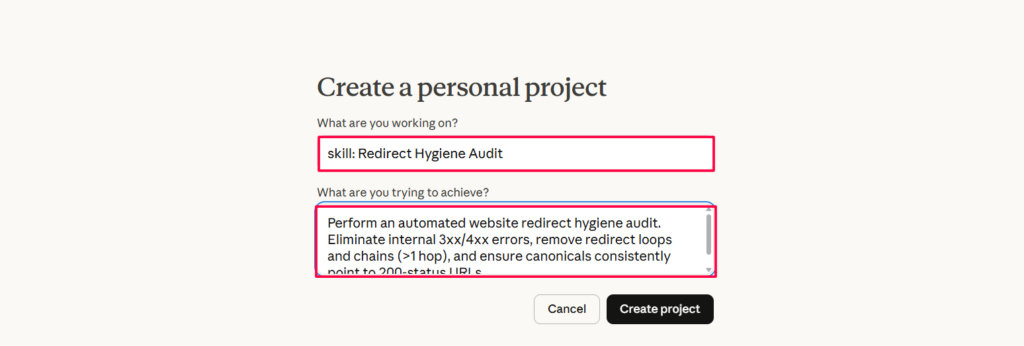

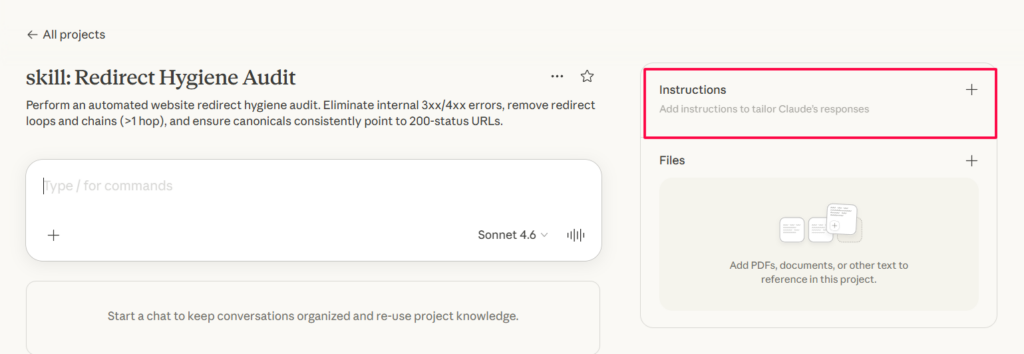

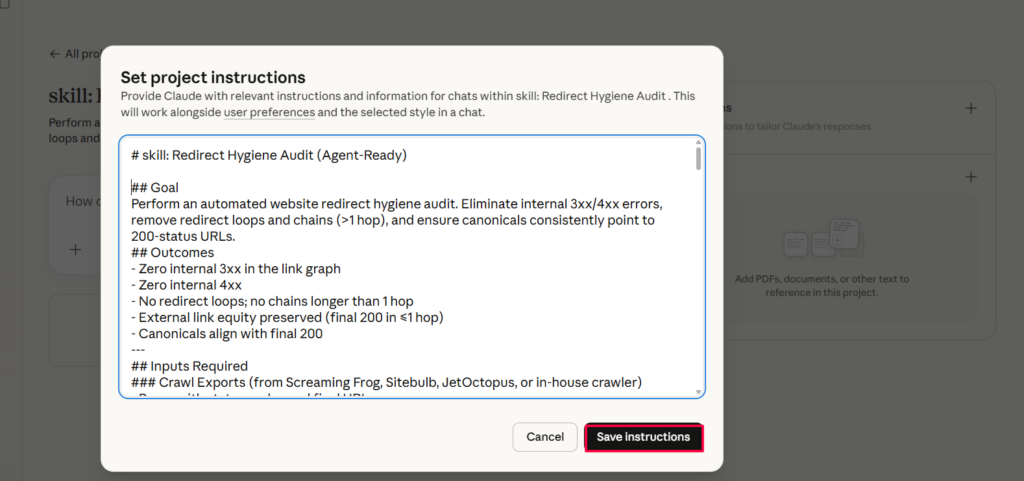

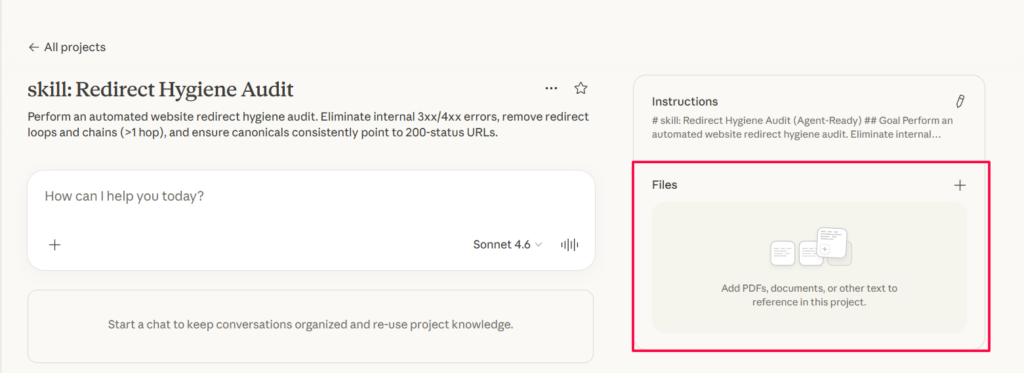

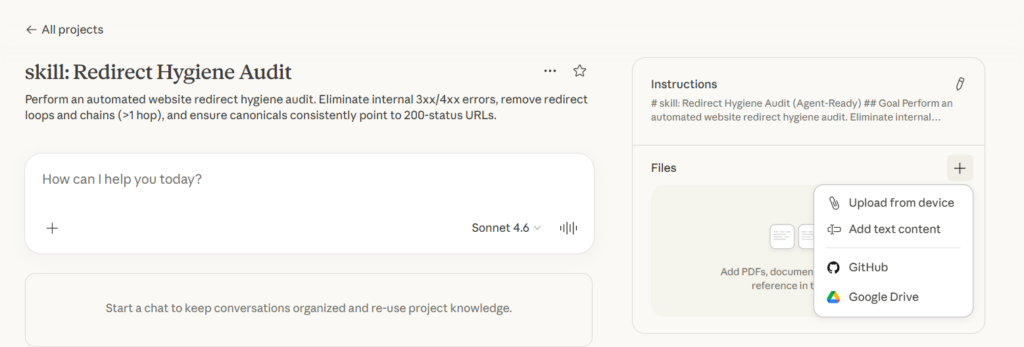

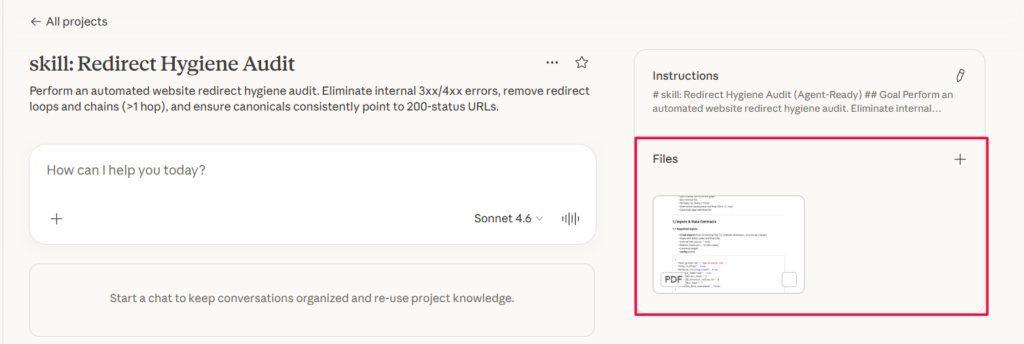

To use it, create a new Claude Project at claude.ai, add the Markdown below as a skill file under Project Knowledge, and start a conversation with your crawl data attached.

A few things to note: Claude cannot run live crawls, so you need to supply the data. Server rule output is generated as text and still needs to be deployed and tested. Backlink and analytics scoring depends on you providing that data; without it, those fields default to zero. For live integration, consider pairing this with an MCP server connected to your crawler API.

The skill file

Copy everything below and add it to your Claude project as a skill file.

skill: Redirect Hygiene Audit (Agent-Ready)

Goal

Perform an automated website redirect hygiene audit. Eliminate internal 3xx/4xx errors, remove redirect loops and chains (>1 hop), and ensure canonicals consistently point to 200-status URLs.

Outcomes

Zero internal 3xx in the link graph. Zero internal 4xx. No redirect loops and no chains longer than 1 hop. External link equity preserved with final 200 in ≤1 hop. Canonicals align with final 200.

Inputs required

Crawl exports (from Screaming Frog, Sitebulb, JetOctopus, or in-house crawler)

Pages with status codes and final URLs. Internal links (source → href). Redirect chains (A→…→Z with codes). Canonical targets.

Config (JSON)

{
  "host_preferred": "www.example.com",
  "http_to_https": true,
  "enforce_trailing_slash": true,
  "enforce_lowercase": true,
  "max_redirect_hops": 5,
  "allowed_internal_redirects": 0,
  "max_chain_hops": 1,
  "backlink_data_available": false
}

Optional feeds

Backlinks (domain → target URL, RD metrics). GA4 / Search Console (page clicks/impressions). Revenue per lead or per session for impact scoring.

Business rules

| Rule | Description | Severity |
|---|---|---|
| R01 | No internal 4xx | S2 High |
| R02 | No internal 3xx in link graph | S2 High |
| R03 | Max chain hops = 1 for external requests | S2 High |
| R04 | No redirect loops/cycles | S1 Critical |
| R05 | Canonical must target a 200 final URL | S3 Medium |
| R06 | Preferred host enforced (301 → preferred) | S3 Medium |
| R07 | HTTP→HTTPS enforced | S3 Medium |
| R08 | Trailing slash policy enforced | S3 Medium |
| R09 | Lowercase policy enforced | S3 Medium |
| R10 | Backlink targets must return 200 in ≤1 hop | S2 High |
| R11 | Update internal links when target moves | S2 High |
| R12 | Homepage is NOT a catch-all redirect | S3 Medium |
| R13 | Orphan indexable pages flagged | S4 Low |
| R14 | Redirect to self disallowed | S1 Critical |
| R15 | Canonical consistency across variants | S3 Medium |
| R16 | Any hop chain > max_redirect_hops = hard fail | S1 Critical |
| R17 | No mixed canonicalization signals | S3 Medium |
| R18 | 302s older than 14 days = violation | S3 Medium |
| R19 | Keep 301s indefinitely for URLs with backlinks | S3 Medium |
| R20 | Sitemaps must list canonical 200s only | S4 Low |

Agent decision logic (step-by-step)

Step 1: Normalize

Normalize all URLs to preferred host, lowercase, and trailing-slash. Build maps: final(url), status(url), inlinks(url), canonical(url).
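A minimal Python sketch of the normalization step, assuming the config keys shown earlier. The function name `normalize_url`, the rule that only `www`/non-`www` variants of the preferred host count as internal, and the choice to skip the trailing slash on file-like paths are illustrative assumptions, not part of the skill definition.

```python
from urllib.parse import urlsplit, urlunsplit

# Assumed config, mirroring the keys in the Config (JSON) section.
CONFIG = {
    "host_preferred": "www.example.com",
    "http_to_https": True,
    "enforce_trailing_slash": True,
    "enforce_lowercase": True,
}

def _bare(host):
    """Lowercase a host and drop a leading 'www.' so variants compare equal."""
    host = host.lower()
    return host[4:] if host.startswith("www.") else host

def normalize_url(url, config):
    """Apply host, scheme, case, and trailing-slash policy to an internal URL."""
    parts = urlsplit(url)
    preferred = config["host_preferred"]
    # Assumption: external URLs are left untouched.
    if _bare(parts.netloc) != _bare(preferred):
        return url
    scheme = "https" if config.get("http_to_https") else parts.scheme
    path = parts.path or "/"
    if config.get("enforce_lowercase"):
        path = path.lower()
    # Assumption: file-like paths (last segment contains a dot) get no slash.
    last_segment = path.rsplit("/", 1)[-1]
    if (config.get("enforce_trailing_slash")
            and not path.endswith("/")
            and "." not in last_segment):
        path += "/"
    # The fragment is dropped because it never reaches the server.
    return urlunsplit((scheme, preferred, path, parts.query, ""))
```

Running every crawled URL through one function like this before building the `final`, `status`, `inlinks`, and `canonical` maps keeps variant URLs from appearing as separate keys.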

Step 2: Build redirect graph

Follow redirects for each URL, capped at max_redirect_hops. Record chain_id and steps. Detect cycles using a visited set.
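The chain walk with a visited set might look like the sketch below. It assumes the redirect data has already been reduced to a plain dict of source URL to target URL. The function name `follow_redirects` and the outcome labels are hypothetical.

```python
def follow_redirects(start, redirects, max_hops=5):
    """Walk a redirect map from `start`.

    `redirects` maps a URL to its redirect target; URLs absent from the map
    are treated as final. Returns (chain, outcome), where outcome is
    "ok", "loop", or "too_long".
    """
    chain = [start]
    visited = {start}
    current = start
    while current in redirects:
        nxt = redirects[current]
        if nxt in visited:
            # Revisiting a URL means a cycle (this includes redirect-to-self).
            chain.append(nxt)
            return chain, "loop"
        if len(chain) - 1 >= max_hops:
            # Another hop would exceed the cap, so stop following.
            return chain, "too_long"
        chain.append(nxt)
        visited.add(nxt)
        current = nxt
    return chain, "ok"
```

The hop count of a chain is `len(chain) - 1`. A `"loop"` outcome corresponds to R04 (or R14 when the chain is just a URL redirecting to itself), and `"too_long"` corresponds to R16.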

Step 3: Detect and classify violations

An internal 4xx triggers R01. An internal link to a 3xx triggers R02 and creates task T02 (UpdateInternalLink). A chain longer than 1 hop for an external request triggers R03. A loop or cycle triggers R04 (Critical). A canonical pointing to a non-200 URL or to a redirect triggers R05. Variant violations for host, HTTP, slash, or case trigger R06 through R09 respectively. A backlink target that returns 4xx or needs more than 1 hop triggers R10. Indexable pages with no inlinks that are also missing from sitemaps are orphans and trigger R13. A redirect to self triggers R14. A hop chain exceeding max_redirect_hops triggers R16. Mixed signals, where canonical ≠ final, trigger R17. 302s older than 14 days trigger R18. Sitemap entries that are not 200 (including any 3xx) trigger R20.
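As an illustration of how two of these checks (R01 and R02) could be evaluated, here is a small Python sketch. The input shapes (a list of `(source, href)` pairs and a `status` dict), the function name, and the output fields are assumptions made for the example.

```python
def classify_internal_links(links, status):
    """Flag R01 (internal 4xx) and R02 (internal link to a 3xx).

    `links` is an iterable of (source, href) pairs and `status` maps a URL
    to its HTTP status code. Links to URLs without a known status are skipped.
    """
    violations = []
    for source, href in links:
        code = status.get(href)
        if code is None:
            continue
        if 400 <= code < 500:
            violations.append(
                {"rule": "R01", "severity": "S2", "source": source, "href": href}
            )
        elif 300 <= code < 400:
            # R02 also creates an UpdateInternalLink task (T02).
            violations.append(
                {"rule": "R02", "severity": "S2", "source": source,
                 "href": href, "task": "T02"}
            )
    return violations
```

The remaining rules would follow the same pattern: one predicate per rule over the maps built in Step 1, each emitting a violation record.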

Step 4: Best-match mapping (for retired URLs)

Work through the heuristics below in order and stop at the first hit. First, check for an exact slug rename in the same parent folder. Second, check for a template plus taxonomy match covering the same practice area, state, or city. Third, check anchor and context similarity from inlinks and backlinks. Fourth, check title and content similarity with cosine ≥ 0.6. Fifth, try the nearest parent category page. Sixth, use the homepage as a last resort only, noting that R12 warns against this.
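A rough Python sketch of the first-hit cascade, showing only two of the six heuristics (title similarity and nearest parent category). The bag-of-words cosine, the record fields `url` and `title`, the function names, and the `None` confidence for the parent fallback are all assumptions. A real implementation might use embeddings or TF-IDF instead.

```python
import math
import re
from collections import Counter

def cosine(a, b):
    """Bag-of-words cosine similarity between two strings."""
    va = Counter(re.findall(r"[a-z0-9]+", a.lower()))
    vb = Counter(re.findall(r"[a-z0-9]+", b.lower()))
    dot = sum(va[t] * vb[t] for t in va)
    norm = (math.sqrt(sum(c * c for c in va.values()))
            * math.sqrt(sum(c * c for c in vb.values())))
    return dot / norm if norm else 0.0

def title_match(retired, candidates, threshold=0.6):
    """Heuristic 4: best title match at or above the cosine threshold."""
    scored = [(cosine(retired["title"], c["title"]), c["url"]) for c in candidates]
    if not scored:
        return None
    score, url = max(scored)
    if score < threshold:
        return None
    return {"target": url, "heuristic": "title_similarity",
            "confidence": round(score, 2)}

def parent_category(retired, candidates):
    """Heuristic 5: fall back to the parent folder if it is a live page."""
    parent = retired["url"].rstrip("/").rsplit("/", 1)[0] + "/"
    if any(c["url"] == parent for c in candidates):
        # No score is defined for this heuristic, so confidence is left unset.
        return {"target": parent, "heuristic": "parent_category",
                "confidence": None}
    return None

def best_match(retired, candidates, heuristics=(title_match, parent_category)):
    """Run heuristics in order and stop at the first hit."""
    for heuristic in heuristics:
        result = heuristic(retired, candidates)
        if result is not None:
            return result
    return None
```

Each heuristic returns a dict that already carries the heuristic name and a confidence, so the decision rationale can be emitted without extra bookkeeping.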

Always emit decision rationale into context JSON, e.g.:

{"heuristic": "taxonomy_match", "confidence": 0.82}

Task types

| Task | Owner | Description |
|---|---|---|
| T01: AddRedirect | DEV | Add a 301 redirect from old to new URL |
| T02: UpdateInternalLink | VA/DEV | Update internal link source to point to new URL |
| T03: FixCanonical | DEV | Correct canonical tag to point to 200 URL |
| T04: RemoveLoop / CollapseChain | DEV | Remove loop or collapse multi-hop chain to 1 hop |
| T05: PublishOr410 | SEO/DEV | Decide to map retired page to best match or return 410 |
| T06: SitemapFix | VA/DEV | Remove non-200 / redirected URLs from sitemap |

Severity and impact scoring

Severity levels

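S1 Critical covers loops (R04), redirects to self (R14), hop chains exceeding max_redirect_hops (R16), and 4xx on key templates. S2 High covers internal 4xx (R01), internal 3xx (R02), chains >1 hop for external requests (R03), backlink targets that return 4xx or need >1 hop (R10), and internal links left pointing at moved targets (R11). S3 Medium covers canonical mismatches (R05/R15/R17), policy variants (R06 through R09), homepage catch-all redirects (R12), stale 302s (R18), and dropped 301s for backlinked URLs (R19). S4 Low covers orphans (R13) on low-value pages and sitemap nits (R20).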

ImpactScore formula (0 to 1000)

  40*(status_is_4xx)
+ 50*(is_loop)
+ 30*max(chain_hops-1, 0)
+ 10*internal_inlinks
+ 5*organic_clicks
+ 100*has_backlinks
+ 0.5*backlink_count
+ 5*rd_score
+ 15*canonical_mismatch
+ 5*template_priority

Missing metrics default to 0. Agent sets priority by Severity DESC, ImpactScore DESC.
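The formula and the sort order can be sketched in Python as follows. The clamp to the 0 to 1000 range is an assumption: the formula states that range but not how it is enforced. The names `impact_score` and `prioritize` and the task fields are hypothetical.

```python
# Lower rank sorts first, so S1 Critical leads the task list.
SEVERITY_RANK = {"S1": 0, "S2": 1, "S3": 2, "S4": 3}

def impact_score(metrics):
    """Compute ImpactScore from a dict of metrics; missing values count as 0."""
    def m(key):
        return float(metrics.get(key) or 0)

    raw = (
        40 * m("status_is_4xx")
        + 50 * m("is_loop")
        + 30 * max(m("chain_hops") - 1, 0)
        + 10 * m("internal_inlinks")
        + 5 * m("organic_clicks")
        + 100 * m("has_backlinks")
        + 0.5 * m("backlink_count")
        + 5 * m("rd_score")
        + 15 * m("canonical_mismatch")
        + 5 * m("template_priority")
    )
    # Assumption: clamp to the stated range, since raw sums can exceed 1000.
    return min(max(raw, 0.0), 1000.0)

def prioritize(tasks):
    """Order tasks by Severity DESC (S1 first), then ImpactScore DESC."""
    return sorted(tasks, key=lambda t: (SEVERITY_RANK[t["severity"]], -t["impact"]))
```

For example, a 404 page with 12 internal inlinks and 40 backlinks scores 40 + 120 + 100 + 20 = 280.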

Acceptance criteria (run-level)

A1: internal_4xx_count == 0. A2: internal_3xx_in_graph == 0. A3: loops == 0 and chains_collapsed_to <= 1. A4: backlink_targets_ok == 100% or explicit exceptions. A5: canonicals_point_to_200 == 100%.

Pipeline and agent roles

The Crawler (Producer) writes raw CSV/JSON to storage. The Ingestor loads data into pages, links_internal, and redirects. The Policy Evaluator applies R01 through R20 and writes policy_violations. The Mapper computes best matches and emits T01/T05. The Task Generator emits T02/T03/T04/T06 and sets priority. The Reporter produces a PDF summary, Fix Sheet, and server rule bundles. The Reviewer Agent validates deltas and marks tasks done after re-crawl.

Reporting artifacts

The Executive Summary (HTML/PDF) contains counts by rule (R01 through R20) and the top 10 impacts. The Fix Sheet (CSV) lists all tasks (T01 through T06) with owners and priority. Server Rules are Nginx/Apache rule files built from T01 plus global policy. The Delta Report shows before vs after metrics.
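One way the Nginx portion of the server rules could be generated from T01 tasks is sketched below. The task fields (`type`, `source`, `target`) are assumed, and the output uses exact-match `location` blocks with `return 301`. Paths with spaces or special characters would need quoting, which this sketch does not handle.

```python
def nginx_rules(tasks):
    """Render one exact-match 301 rule per T01 (AddRedirect) task."""
    lines = []
    for task in tasks:
        if task["type"] != "T01":
            continue  # only AddRedirect tasks become server rules
        lines.append(
            f"location = {task['source']} {{ return 301 {task['target']}; }}"
        )
    return "\n".join(lines)
```

The output is plain text that still needs to be reviewed, deployed, and tested on the server.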

SLAs and SLOs

High-severity (S1/S2) tasks must be actioned within 3 business days. Re-crawl cadence is weekly for active migrations and monthly otherwise. The SLO target is to maintain A1 through A5 at ≥ 99% across runs.

Edge cases

JS-only routes should be sample-rendered without blowing crawl budget. Locale and hreflang setups require that canonicals and redirects align per locale. Query parameters should be allowlisted: keep meaningful params and strip all others. Pagination using rel="next/prev" must not redirect to non-self. Files such as PDFs and images need validation for 200 status and correct Content-Type.
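The query-parameter rule can be illustrated with a short Python sketch using the standard library. The function name `strip_params` and the example allowlist are assumptions. Which parameters count as meaningful is site-specific.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

def strip_params(url, allowed):
    """Keep only allowlisted query parameters and drop everything else."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key in allowed
    ]
    return urlunsplit(
        (parts.scheme, parts.netloc, parts.path, urlencode(kept), parts.fragment)
    )
```

With an allowlist of `{"page"}`, tracking parameters such as `utm_source` are removed while pagination survives.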

Rollback plan

All server rule changes are shipped behind a feature flag. Previous rules are kept for 7 days after deploy. If 5xx rates spike after deploy, the system triggers auto-rollback to the last stable artifact.